Abstract

Visual attention allows selecting relevant information from cluttered visual scenes and is largely determined by our ability to tune or bias visual attention to goal-relevant objects. Originally, it was believed that this top-down bias operates on the specific feature values of objects (e.g., tuning attention to orange). However, subsequent studies showed that attention is tuned in a context-dependent manner to the relative feature of a sought-after object (e.g., the reddest or yellowest item), which drives covert attention and eye movements in visual search. However, the evidence for the corresponding relational account is still limited to the orienting of spatial attention. The present study tested whether the relational account can be extended to explain attentional engagement and, specifically, the attentional blink (AB) in a rapid serial visual presentation (RSVP) task. In two blocked conditions, observers had to identify an orange target letter that could be either redder or yellower than the other letters in the stream. In line with previous work, a target-matching (orange) distractor presented prior to the target produced a robust AB. Extending prior work, we found an equally large AB in response to relatively matching distractors that matched only the relative color of the target (i.e., red or yellow, depending on whether the target was redder or yellower). Unrelated distractors mostly failed to produce a significant AB. These results closely match previous findings assessing spatial attention and show that the relational account can be extended to attentional engagement and selection of continuously attended objects in time.

Attention modulates sensory and cognitive processes to select the most relevant objects for further in-depth processing in accord with current behavioral goals. Given the importance of attention for conscious perception and behavior, much research has been devoted to identifying how we orient attention. Traditionally, the allocation of attention to spatial locations and/or objects has been assumed to involve two functionally distinct processes. Attention is first oriented to objects and then engaged on that object or location (Folk, Ester, & Troemel, 2009; Posner, Cohen, & Rafal, 1982; Remington & Folk, 2001). Orienting is often conceptualized as shifting spatial attention (or the eyes) to a particular location (e.g., Posner, 1980), or as the appearance of an attentional gradient in a particular location (e.g., Downing, 1988; Weichselgartner and Sperling, 1996). Engagement of attention is characterized by visual processing of objects that leads to their classification or identification (e.g., Zivony & Eimer, 2020). Orienting attention to a location usually leads to engagement and feature processing, but not always: For example, using a variant of the spatial cuing task, Remington and Folk (2001) found that orienting attention to an object (as revealed by cue validity effects) did not ensure that the constituent feature properties of the object were processed (as evidenced by the lack of response compatibility effects from invalidly cued nontargets). Similarly, eye movement studies have shown that an erroneous eye movement to a location is often swiftly followed by a corrective saccade, without lingering at the location and with latencies shorter than the time needed to program an eye movement (which suggests that the corrective eye movement was programmed in parallel with the initial saccade; e.g., Findlay, Brown, & Gilchrist, 2001; Godijn & Theeuwes, 2002).

Orienting and reorienting without engagement seems especially likely when attention or the gaze is reflexively and nonintentionally oriented to a stimulus. Of note, orienting is determined by an interplay of two attentional control systems: (1) attention can be automatically drawn to salient stimuli, leading to an automatic, bottom-up, stimulus-driven prioritization of stimuli that have a high local feature contrast (e.g., Itti and Koch 2000, 2001; Theeuwes, 1992) and (2) attention can be tuned or biased in a top-down controlled manner to the features of goal-relevant items, such as the color of a sought-after item (e.g., Folk, Remington, & Johnston, 1992; Folk & Remington, 1998; Wolfe, 1994). Top-down control is subject to certain limitations, in that attention can only be tuned to specific elementary features (e.g., color, size, motion, orientation, shape; Treisman & Gelade, 1980; for an overview, see Wolfe, 1998). Consequently, other, irrelevant items that share this feature can reflexively attract attention (attentional capture; Folk et al., 1992; Folk & Remington, 1998). For instance, when looking for a red car, a red road sign can automatically or reflexively capture attention, which leads to erroneous selection of objects.

Originally, it was believed that attention is always top-down tuned to the exact feature value of the target (e.g., red, green; e.g., Duncan & Humphreys, 1989). According to these feature similarity views, all items that are similar to the target feature or features should be able to attract attention (e.g., Duncan & Humphreys, 1989; Martinez-Trujillo & Treue, 2004; Navalpakkam & Itti, 2007) or the gaze (e.g., Ludwig & Gilchrist, 2002).

However, subsequent research has shown that attention is often biased in a context-dependent manner to the feature that the target has relative to other items in the surround (e.g., redder, larger, darker). According to the relational account (Becker, 2010), biasing attention to the relative feature may render selection more robust, as the exact feature values of objects exhibit high variability in natural environments (e.g., elementary colors and shapes vary with changes in the shading, distance, viewpoint). To eliminate this variability, the visual system computes how a prespecified target would differ from the context of irrelevant items, and biases attention to the relative feature of the target (e.g., redder, larger, darker; Becker, 2010; Becker, Folk, & Remington, 2013). For instance, in search for an orange target among yellow or green items, attention will be biased to the reddest item or all redder items, whereas the same orange target among mostly red items will lead to an attentional bias for the yellowest item or all yellower items (e.g., Becker, 2010). As a consequence of this “relational search,” an irrelevant distractor that matches the relative feature of the target (e.g., yellow) can attract attention and eye movements more strongly than a target-matching (e.g., orange) distractor, which has been shown in several studies using eye-movement recordings (e.g., Becker, Harris, Venini, & Retell, 2014), and studies that assessed covert attention shifts by behavioral validity effects (Becker, Folk, & Remington, 2010, 2013) or EEG (Schönhammer, Grubert, Kerzel, & Becker, 2016).

Subsequent studies showed that attention can also be tuned to the specific feature value of a target, which limits selection to only target-similar items, as prescribed by the feature similarity accounts (e.g., Becker et al., 2014; Harris, Remington, & Baker, 2013). However, feature-specific tuning is only observed in very specific and somewhat artificial conditions that render it impossible to locate the target in virtue of its relative features (e.g., when an orange target is randomly presented among all-red and all-yellow nontargets, so that it is unforeseeably redder or yellower; Becker et al., 2014; Harris et al., 2013). When the target feature and the likely context are known, attention is tuned to the relative target feature, indicating that relational search is the common or “default” mode of top-down tuning (Becker et al., 2014).

Although the initial orienting of spatial attention is biased by relational context rather than specific feature values, it is unclear whether the subsequent engagement of attention is also governed by context-dependent selection mechanisms, or may depend more on a feature-specific match (e.g., Becker et al., 2014; but see Martin & Becker, 2018).

The factors determining attentional engagement have previously been studied in rapid serial visual presentation (RSVP) tasks, in which attending to a stimulus leads to an attentional blink (AB; Raymond, Shapiro, & Arnell, 1992; Zivony & Eimer, 2020). In the task, participants are presented with a stream of briefly presented stimuli (e.g., letters) in the same location and continuously monitor the stream for a predefined target (or multiple targets). Presenting a target or a target-similar distractor prior to the (second) target (at Lag 2) typically impairs identification of the target (e.g., Folk, Leber, & Egeth, 2008; Raymond et al., 1992). This AB, or impairment in target identification, likely reflects the engagement of attention, as the RSVP task involves central presentation of a single stream of characters, and hence no need to orient spatial attention (e.g., Zivony & Eimer, 2020).

Similar to the orienting process, there is evidence that engagement is contingent on top-down control settings that specify the defining features of the target. For example, in a study by Folk et al. (2008), subjects searched for a specific colored target letter embedded in an RSVP stream of variably colored nontarget letters inside a box centered on fixation. On some trials, a distractor, consisting of a brief change in the color of the box, occurred at various temporal lags prior to the target. This distractor produced an AB, but only when it matched the target color, suggesting that attentional engagement was contingent on a top-down setting for a particular color.

Although the results of Folk et al. (2008) show that attentional engagement is contingent on top-down control settings, the nature of those control settings, especially when the target is defined (nominally) by a particular feature, is unclear. Specifically, because the target-similar items also always matched the relative features of the target, and target-dissimilar items failed to match the relative features of the target, it is unclear whether the attentional blink was due to observers adopting a top-down set for the specific feature value, or for the relative feature of the target. In the present study, we explicitly manipulated the featural or relational similarity between a nontarget distractor and the target, to identify whether engagement processes are feature or relationally based.

Experiment 1

To test whether attentional engagement operates on the target’s relative feature or its exact feature value, we used an RSVP task in which the target was either a red-orange letter embedded in a stream of yellow-orange letters (redder target condition) or a yellow-orange letter embedded in a stream of red-orange letters (yellower target condition). The irrelevant distractor was a symbol that could have one of several colors and was presented at different time points relative to the target.

We expected the AB to be maximal when the distractor preceded the target by two items (Lag 2: distractor, followed by a nontarget, followed by the target; e.g., Folk et al., 2002), and additionally included a Lag 4 condition, in which three nontarget letters intervened between the distractor and the target, to assess whether any of the distractors had particularly long-lasting effects on target detection. To avoid making the distractor symbols predictive of the target, we also included a lead condition, in which the distractor was presented after the target. In previous studies, the lead condition was used to assess the presence of an AB in the lag conditions (e.g., Dalton & Lavie, 2006). However, there is evidence that distractors appearing after the target can produce “late” disruptive effects that could contaminate AB measures (e.g., Most & Junge, 2008). Hence, in the present study, we included a “no distractor” control condition in which the distractor had the same color as the nontargets, to extract impairments in performance caused by the differently colored distractors (see Folk et al., 2008, for a similar procedure; see also Note 1).

To distinguish between a relational versus feature-specific account of the AB, we included distractors of different colors; a red, red-orange, yellow-orange, and yellow distractor that spanned the range from red to yellow, and a salient green distractor that was very dissimilar from the other colors. If engagement depends on exact feature value matches, we would expect an AB (i.e., significant decrement in performance at Lag 2) only for the distractors matching the target color (i.e., red-orange and yellow-orange in the respective target conditions). Conversely, if engagement operates on the same relational principles that drive spatial attention, we would expect an AB by both target-similar distractors and relatively matching distractors that matched the relative color of the target—that is, the red distractor in the redder target condition (where the target was red-orange), and the yellow distractor in the yellower target condition (where the target was yellow-orange).

Method

Participants

Twenty-five undergraduate students from The University of Queensland (eight males, 17 females; mean age = 18.88 years, SD = 1.79) participated in a 60-minute experimental session for credit toward fulfillment of a class research requirement. All participants had normal or corrected-to-normal vision and normal color vision.

Apparatus

An Intel Duo 2 CPU 2.4 GHz computer with a 17-in. LCD color monitor was used to generate and display the stimuli and to control the experiment. Stimuli were presented with a resolution of 1,280 × 1,024 pixels and a refresh rate of 75 Hz. Participants were seated in a normally lit room and viewed the screen from a distance of approximately 60 cm.

Stimuli

All stimuli were displayed against a light-gray background. The RSVP stream consisted of a series of 18 colored letters (Arial Black, 17 pt) displayed one after another in the center of the screen, without blanks. The letters were randomly drawn (without replacement) from the alphabet, exempting the letters J, P, Q, and V (as these were deemed too confusable with other letters). The timing of stimuli was adjusted individually for each observer prior to the experiment, so that observers on average made a correct target identification on 75%–80% of all trials. In the experimental conditions, the average presentation duration of the letters was 123 ms, and it ranged from 90 to 200 ms across different observers.

To prevent observers from finding the target by attending to all deviants (singleton detection mode; Bacon & Egeth, 1994; Folk et al., 2008), and to encourage attending to the color of the target, the color of all nontarget letters in the RSVP stream varied slightly (see Folk et al., 2008, for a similar method). In the redder target condition, the target was red-orange and presented among nontarget letters that were yellow-orange and varied slightly from RGB values of 255, 170, 0 to RGB values of 255, 220, 0. In the yellower target condition, the color assignment to target and nontargets was reversed, such that the target was now yellow-orange (RGB: 255, 170, 0) while the nontargets were red-orange, varying from RGB values of 255, 78, 0 to RGB values of 255, 128, 0. The target was always presented at a random position within the RSVP stream (Positions 6 to 11, out of 18 positions), which ensured that it was presented neither at the start nor at the end of the RSVP stream.
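The stream construction described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' experiment code: the function name `make_stream`, the dictionary `NONTARGET_GREEN`, and the choice to sample the green channel uniformly within the reported range are all hypothetical. The target color itself is left to the caller.

```python
import random
import string

# Letters J, P, Q, and V were excluded as too confusable with other letters.
EXCLUDED = set("JPQV")
LETTERS = [c for c in string.ascii_uppercase if c not in EXCLUDED]

# Reported green-channel range of the nontarget colors (red = 255, blue = 0).
NONTARGET_GREEN = {"redder": (170, 220), "yellower": (78, 128)}


def make_stream(target_condition, rng, n_items=18):
    """Return (letters, nontarget_colors, target_position) for one stream.

    Assumption: nontarget hues are drawn uniformly within the reported range;
    the text only says that they "varied slightly".
    """
    letters = rng.sample(LETTERS, n_items)  # drawn without replacement
    lo, hi = NONTARGET_GREEN[target_condition]
    colors = [(255, rng.randint(lo, hi), 0) for _ in range(n_items)]
    target_position = rng.randint(6, 11)  # 1-based Positions 6 to 11
    return letters, colors, target_position


if __name__ == "__main__":
    letters, colors, pos = make_stream("redder", random.Random(0))
    print("".join(letters), "target at position", pos)
```

Passing a seeded `random.Random` instance keeps the sketch reproducible without touching the global random state.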

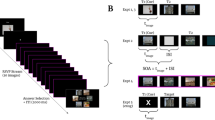

The irrelevant distractor was always a symbol and was randomly drawn from a pool of the following symbols: @, #, $, %, &, +, and ?. The distractor could be yellow (RGB: 255, 190, 0), yellow-orange (RGB: 255, 170, 0), red-orange (RGB: 255, 180, 0), red (RGB: 255, 70, 0), or green (RGB: 0, 180, 0).

Figure 1. Examples of the distractor symbols and colors used in the experiment (top) and an example of a trial (bottom). The example depicts the redder target condition, in which observers had to report a red-orange letter among yellow-orange letters of varying hues. An irrelevant distractor was presented at Lag 2, Lag 4, or with the target leading (by 2), and observers received immediate feedback whether or not they had identified the target letter correctly.

Design

The experiment consisted of a 2 × 5 × 3 design, with the within-subjects variables “target color” (redder vs. yellower), “distractor color” (red, red-orange, yellow-orange, yellow, salient green), and “target-distractor lag” (Lag 2, Lag 4, and lead). The target color was blocked and the order of blocks counterbalanced across participants. The distractor color and target-distractor lag conditions varied randomly within a block, with the limitation that each distractor was presented an equal number of times at each lag condition (24 trials per cell, i.e., 360 trials per target condition across the 15 cells).
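As a rough illustration of this design, the following Python sketch builds one randomized, fully balanced block. It is not the authors' code: `make_block`, `LAG_OFFSETS`, and the condition labels are hypothetical names. The value of 24 repetitions per cell is an assumption chosen so that the 15 distractor-by-lag cells yield the 360 trials per target condition reported in the Procedure. The lag offsets follow the verbal description (Lag 2: distractor, one nontarget, target; Lag 4: three intervening nontargets; lead: target leading by 2).

```python
import itertools
import random

DISTRACTOR_COLORS = ["red", "red-orange", "yellow-orange", "yellow", "green"]
LAGS = ["lag2", "lag4", "lead"]

# Distractor position relative to the target position in the stream.
LAG_OFFSETS = {"lag2": -2, "lag4": -4, "lead": +2}


def make_block(target_condition, reps_per_cell, rng):
    """Return a shuffled list of trials with equal repetitions per cell."""
    trials = [
        {"target": target_condition, "distractor": color, "lag": lag}
        for color, lag in itertools.product(DISTRACTOR_COLORS, LAGS)
        for _ in range(reps_per_cell)
    ]
    rng.shuffle(trials)
    return trials


if __name__ == "__main__":
    block = make_block("redder", reps_per_cell=24, rng=random.Random(1))
    print(len(block), "trials in the block")
```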

Procedure

Participants were tested individually in a normally lit room. Prior to the experiment, participants were instructed to report an orange target letter that was either presented among all yellow-orange or all red-orange nontarget letters (depending on the condition). Participants were also informed about the possible appearance of a distractor symbol that could have varying colors, and instructed to try to ignore it, as it was irrelevant to the task and attending to it would harm performance. Participants were also instructed to keep their eyes on the fixation cross for the duration of the entire trial, and to respond as accurately as possible after the end of the trial (unspeeded response).

Each trial started with the presentation of the fixation cross in the middle of the screen (500 ms). A central stream of 18 randomly selected letters was then presented in rapid succession. At the end of the sequence, observers were prompted to type in the target letter using the keyboard (unspeeded response). Immediately after the response, a feedback display was presented (500 ms) containing the written words “Correct” or “Wrong” (Arial, 14 pt), followed by a blank screen (250 ms). The next trial again started with the fixation cross. Participants completed 360 trials per target condition (720 trials in total), and were regularly encouraged to take a short break, to avoid fatigue.

Data

For the data analyses, the distractors were recoded in terms of their color relative to the target and nontarget stream items. When the red-orange and yellow-orange distractors matched the nontargets in the stream, they were coded as a “no distractor” condition and were used as a control to evaluate a potential AB in the other distractor conditions (see Folk et al., 2008). Distractors matching the target color were coded as target-similar distractors. Red and yellow distractors were labeled relative-matching or (relatively) opposite distractors, depending on whether the target was redder (red is relatively matching, yellow is relatively opposite) or yellower (yellow is relatively matching, whereas red is relatively opposite). Green distractors were coded as salient distractors with no relationship to the target.
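The recoding scheme can be written out as a small lookup. The sketch below is one hypothetical way to express it in Python; the function name `recode_distractor` and the label strings are illustrative and not taken from the authors' analysis scripts.

```python
def recode_distractor(target_condition, distractor_color):
    """Map a physical distractor color to its role relative to the target.

    target_condition: "redder" (red-orange target among yellow-orange
    nontargets) or "yellower" (yellow-orange target among red-orange ones).
    """
    if distractor_color == "green":
        return "salient"

    redder = target_condition == "redder"
    target_color = "red-orange" if redder else "yellow-orange"
    nontarget_color = "yellow-orange" if redder else "red-orange"
    matching_color = "red" if redder else "yellow"
    opposite_color = "yellow" if redder else "red"

    roles = {
        target_color: "target-similar",
        nontarget_color: "no-distractor",  # control: same color as the stream
        matching_color: "relative-matching",
        opposite_color: "opposite",
    }
    return roles[distractor_color]


if __name__ == "__main__":
    for condition in ("redder", "yellower"):
        for color in ("red", "red-orange", "yellow-orange", "yellow", "green"):
            print(condition, color, "->", recode_distractor(condition, color))
```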

Results

Accuracies were quite high in the task (74.4%), and ranged from 66.2% to 81.6%, with the individually adjusted presentation durations varying between 90 ms and 200 ms. A 2 × 3 × 5 analysis of variance (ANOVA), with the variables target color (redder, yellower), lag (2, 4, lead), and distractor color (relative-matching, target-similar, nontarget-similar, opposite, salient) computed over the mean accuracies showed no significant differences between redder and yellower targets, F < 1, but significant main effects of lag, F(2, 48) = 18.69, p < .001, ηp² = .44, and distractor color, F(4, 96) = 20.38, p < .001, ηp² = .46. All two-way interactions were significant, Target × Lag: F(2, 48) = 57.81, p < .001, ηp² = .71, Target × Distractor: F(4, 96) = 27.05, p < .001, ηp² = .53, Lag × Distractor: F(8, 192) = 33.52, p < .001, ηp² = .58, as was the three-way interaction, F(8, 192) = 47.23, p < .001, ηp² = .66.

As shown in Fig. 2a, the relative-matching and target-similar distractors both produced large decrements in target detection in the Lag 2 condition relative to the other distractor conditions, indicating that both the relative-matching and target-similar distractor produced a significant AB. To formally assess the presence of an AB in the data, we compared the data of each distractor with the corresponding lag of the no-distractor condition (see Note 2). As this involved reusing the data from the no-distractor condition four times in each target condition, the alpha significance level was adjusted to α = .0125 (0.05 divided by 4).
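To make the comparison logic concrete, here is a minimal Python sketch of how an AB score and its test statistic could be computed. It assumes that the AB is scored per participant as accuracy in the no-distractor condition minus accuracy in the distractor condition at the same lag, and that the comparison is a paired t test (consistent with the 24 degrees of freedom for 25 participants). The function names and the example accuracies are hypothetical, not the study's data.

```python
import math
import statistics

# Adjusted significance level stated in the text: 0.05 / 4 comparisons.
ALPHA = 0.05 / 4


def ab_scores(control_acc, distractor_acc):
    """Per-participant AB: control accuracy minus distractor accuracy."""
    return [c - d for c, d in zip(control_acc, distractor_acc)]


def paired_t(diffs):
    """Return (t, df) for a paired t test on difference scores."""
    n = len(diffs)
    standard_error = statistics.stdev(diffs) / math.sqrt(n)
    return statistics.mean(diffs) / standard_error, n - 1


if __name__ == "__main__":
    # Hypothetical accuracies for five participants (illustration only).
    control = [0.82, 0.79, 0.85, 0.77, 0.80]
    distractor = [0.60, 0.66, 0.70, 0.58, 0.69]
    t, df = paired_t(ab_scores(control, distractor))
    print(f"t({df}) = {t:.2f}, compare the p value against alpha = {ALPHA}")
```

The p value would then be read from a t distribution with `df` degrees of freedom and compared against the adjusted alpha level.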

Figure 2. (a) The mean target accuracies depicted separately for the two different target conditions (redder, yellower), distractors, and target–distractor lags. An AB, reflected in an impairment in performance at Lag 2, was evident for relatively matching distractors, target-similar distractors, and, to a lesser extent, for the salient green distractor. (b) The AB at Lag 2, computed as the difference value to the no distractor (at Lag 2), separately for the redder target (left) and yellower target (right), showed a significantly larger AB for the relative-matching distractor than for the target-similar distractor, which in turn had a larger AB than the opposite distractor. Error bars depict ±SEM and may be smaller than the plotting symbol. ***p < .001; **p < .01. Black asterisks show a significant AB; gray asterisks show significant differences between conditions.

First, considering the Lag 2 conditions, the results revealed a significant AB for the relative-matching distractor (redder target: t(24) = 6.9, p < .001, ηp² = .66; yellower target: t(24) = 14.9, p < .001, ηp² = .90) and the target-similar distractor (redder target: t(24) = 4.3, p < .001, ηp² = .43; yellower target: t(24) = 6.2, p < .001, ηp² = .62), relative to Lag 2 of the no-distractor condition. The salient distractor produced a significant AB in the redder target condition, t(24) = 3.4, p = .002, ηp² = .33, but not in the yellower target condition at the adjusted alpha level, t(24) = 2.1, p = .046, ηp² = .16, and the opposite distractor did not produce an AB with either target (redder target: t(24) = 1.7, p = .110; yellower target: t < 1).
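For a single-degree-of-freedom contrast such as these t tests, partial eta squared can be recovered from the test statistic as t² / (t² + df). The short Python check below applies this standard identity to the t values reported above. The function name is illustrative, and because the t values are rounded to one decimal, the recomputed effect sizes can differ from the reported ones by about .01.

```python
def partial_eta_squared_from_t(t, df):
    """Partial eta squared for a 1-df effect, computed from t and its df."""
    return t * t / (t * t + df)


if __name__ == "__main__":
    # (reported t, reported effect size) pairs from the Lag 2 comparisons.
    reported = [(6.9, 0.66), (14.9, 0.90), (4.3, 0.43),
                (6.2, 0.62), (3.4, 0.33), (2.1, 0.16)]
    for t, eta in reported:
        recomputed = partial_eta_squared_from_t(t, df=24)
        print(f"t(24) = {t}: recomputed {recomputed:.3f}, reported {eta}")
```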

Comparing the magnitude of the AB effects across the distractor conditions (see Fig. 2b) revealed that the AB of the relative-matching distractor was significantly larger than that of all other distractors, including the target-similar distractor (redder target: all ts > 3.0, ps ≤ .006; yellower target: all ts > 3.8, ps ≤ .001). Moreover, the AB of the target-similar distractor was larger than the AB of the salient distractor in the yellower target condition, t(24) = 4.7, p < .001, but not in the redder target condition, t < 1, and was always larger than the nonsignificant AB of the opposite distractor (redder target: t(24) = 5.6, p < .001; yellower target: t(24) = 5.9, p < .001). The AB of the salient distractor was significantly larger than the (nonsignificant) AB of the opposite distractor in the redder target condition, t(24) = 4.6, p < .001, but did not differ from it in the yellower target condition, t(24) = 1.7, p = .10.

To assess whether any of the distractors had particularly long-lasting effects, we also compared the Lag 4 condition of all distractors to the Lag 4 condition of the no-distractor control condition, using the same adjusted alpha level of α = .0125. In the redder target condition, the results mimicked those of the Lag 2 condition, with a significant AB for the relative-matching distractor, t(24) = 7.2, p < .001, the target-similar distractor, t(24) = 4.7, p < .001, and the salient distractor, t(24) = 3.9, p = .001, and no significant AB for the opposite distractor, t < 1. In the yellower target condition, only the relative-matching distractor significantly impaired target identification, t(24) = 7.7, p < .001, whereas the other distractors did not produce a significant AB at Lag 4, all ts < 1.5, ps > .15. Thus, the time course of the AB seemed to depend on the properties of both the distractor and the target, with the most effective distractors showing particularly long-lasting effects in the redder target condition, but less so in the yellower target condition.

General discussion

The present results support a relational account of attentional engagement: We found that a target-dissimilar distractor that matches only the relative color of the target produced a significant AB, and even a significantly larger AB than a target-similar distractor. These findings are at odds with the “standard view” of the AB, that items need to be similar to the target to produce a significant AB (e.g., Chartier, Cousineau, & Charbonneau, 2004; Chun & Potter, 1995; Duncan, Ward, & Shapiro, 1994; Shapiro et al., 1994; Ward, Duncan, & Shapiro, 1997; Zivony & Eimer, 2020), and indicate that attentional engagement operates on the same, relational principles as the orienting of attention and eye movements (Becker, 2010).

Salient, target-dissimilar distractors produced only weak and inconsistent effects, with a salient green distractor producing a significant AB in the redder target condition but not in the yellower target condition (and both being significantly weaker than the AB of the relatively matching and target-similar distractors). These findings closely match the results usually found in spatial attention, when observers have to search for a salient target with a particular color: In these experiments, too, we typically find that relatively matching distractors attract attention and the gaze most strongly, closely followed by target-similar distractors, and significantly less or no capture by salient items (e.g., Martin & Becker, 2018; York & Becker, 2020). This correspondence indicates that attentional orienting and attentional engagement share important characteristics, and that they are partly based on the same (relational) processes and mechanisms (see also Visser, Zuvic, Bischof, & Di Lollo, 1999).

This is an interesting result, as the visual search paradigm and the current RSVP task are commonly believed to tap into different processes: Visual search centrally requires the allocation of spatial attention (or gaze) to the target (i.e., orienting), whereas the RSVP task requires selectively allocating attentional resources in time. Moreover, a significant AB is only observed when an item has passed an initial “attentional filter” or “gate” and entered higher processing stages necessary for consolidation and decision-making (e.g., Dux & Marois, 2009; see also Bowman & Wyble, 2007; Chun & Potter, 1995; Olivers & Meeter, 2008). In addition, saliency in visual search refers to the feature contrast between search items that are simultaneously present, whereas in the RSVP task, it refers to the feature contrast between successively presented stimuli.

In this respect, it could also be questioned whether the AB of the salient distractor in the redder target condition was genuinely due to its bottom-up saliency, or whether attention may have been in part tuned to salient items in the redder condition (singleton detection mode; e.g., Bacon & Egeth, 1994; Folk et al., 2008; or color singleton detection mode; Harris, Becker, & Remington, 2015). To address this question, we computed the bivariate correlations between the AB effects of the different distractors at Lag 2 (using the same AB scores as above; see Fig. 2b). As shown in Fig. 3, the AB of the relative-matching distractor correlated significantly with the AB of the target-similar distractor, r(23) = .793, p < .01. However, neither distractor correlated significantly with the AB of the salient distractor, r(23) = .374, and r(23) = .307, both ps > .05. Fisher’s z test showed that the correlation between the AB of the relative-matching and target-similar distractor was significantly higher than the correlation between relatively matching and salient distractor, z = 2.94, p = .003, and target-similar and salient distractor, z = 2.48, p = .013. These findings suggest that the AB of the relative-matching and target-similar distractors were both due to a common top-down attentional mechanism, whereas the AB of the salient distractor was due to unrelated, possibly stimulus-driven, processes.

Figure 3. Scatterplots depicting the correlations in the AB between the two putative top-down-driven ABs (relative-matching, target-similar; left panel) and the correlations of either one with the salient distractor’s AB (middle and right panels), in the redder target condition. The AB of the relative-matching distractor correlated significantly with the AB of the target-similar distractor (left panel), whereas the salient distractor’s AB was not significantly correlated with either the AB of the target-similar distractor (middle panel), or of the relative-matching distractor (right panel). This suggests that the AB of the salient distractor was not due to the same top-down processes that produced the AB of the relative-matching and target-similar distractor.

These findings shed new light on previous discrepant results, with some studies reporting no significant AB by task-irrelevant salient items (Raymond et al., 1992; Seiffert & DiLollo, 1997) and others showing a significant AB, especially for target-similar distractors (Chun, 1997; Folk et al., 2008; Maki & Mebane, 2006; Spalek, Falcon, & DiLollo, 2006; Wee & Chua, 2004). The present study is one of the few that allows comparing target-similar and salient distractors and reveals that the AB of salient irrelevant distractors is fairly small and inconsistent, which can help explain why previous studies often failed to find a significant AB by salient distractors (see also Dux & Marois, 2009; Dux, Asplund, & Marois, 2009).

The rather weak and inconsistent AB of salient distractors mimics previous results in spatial attention (e.g., in visual search; Becker, Lewis, & Axtens, 2017; Becker, 2018; Kiss et al., 2012), and could be similarly explained by a “leaky filter” that does not reliably filter out all salient items (e.g., Raymond et al., 1992; see also Bowman & Wyble, 2007; Olivers & Meeter, 2008), the possibility of multiple, simultaneous top-down sets for features and singletons (Becker, Martin, & Hamblin-Frohman, 2019), or variability in top-down control settings that allows capture by salient items on trials where top-down control happens to be weak (Leber, 2010).

More importantly, the results of correlational analyses also corroborate that the effects of the relative-matching and target-similar distractor are due to a common, top-down mechanism. This provides additional support for the conclusion that the AB for the relative-matching distractor is larger because it better matches a relational top-down setting (rather than differences in bottom-up saliency). Again, these results closely resemble previous findings in visual search, which showed stronger attentional capture and gaze capture by relative-matching distractors than target-similar distractors (e.g., Becker et al., 2014; Martin & Becker, 2018).

The stronger AB by the relative-matching than target-similar distractor probably reflects the fact that the relative-matching distractor has a higher probability of getting selected and entering higher processing stages (e.g., working memory) because it better matches the (relational) target definition (e.g., Olivers & Meeter, 2008). Alternatively, the larger AB could also be due to relative-matching distractors depleting limited resources to a greater extent (in resource models of the AB; e.g., Chun & Potter, 1995), or being inhibited more strongly (possibly after producing a larger boost; e.g., Olivers & Meeter, 2008). Other mechanisms could also possibly explain the larger AB by the relative-matching distractor. While this question would require further research, the present results indicate important commonalities between spatial orienting in visual search and attentional engagement in the AB.

In conclusion, the present study provides the first evidence that feature-based attentional control settings that modulate the AB can operate on relative features rather than absolute feature values. Of course, engagement may not always be driven by relational features; a purely feature-based AB may be observed if the context discourages relational strategies. Nonetheless, the current results are the first to demonstrate a relationally based AB, and thus more broadly support a unified account of attentional orienting and engagement.

Author note

This research was supported by ARC Discovery Grant DP170102559 to S.I.B.

Open practices statement

All materials of the present study (procedures, experiment, and analysis program codes) and data will be made available to interested parties upon request. Please contact: s.becker@psy.uq.edu.au

Notes

The control distractor was still a symbol (not a letter), to ensure that differences between the experimental conditions and the control condition were solely due to differences in colour (not the shape of the items).

Using the lead conditions of each distractor as a control condition to assess the presence and magnitude of the AB yielded very similar results (data available upon request).

References

Bacon, W. F., & Egeth, H. E. (1994). Overriding stimulus-driven attentional capture. Perception & Psychophysics, 55(5), 485–496. doi:https://doi.org/10.3758/BF03205306

Becker, S. I. (2010). The role of target-distractor relationships in guiding attention and the eyes in visual search. Journal of Experimental Psychology: General, 139(2), 247–265. doi:https://doi.org/10.1037/a0018808

Becker, S. I. (2018). Reply to Theeuwes: Fast, feature-based top-down effects, but saliency may be slow. Journal of Cognition, 1(28), 1–3.

Becker, S. I., Folk, C. L., & Remington, R. W. (2010). The role of relational information in contingent capture. Journal of Experimental Psychology: Human Perception and Performance, 36, 1460–1476.

Becker, S. I., Folk, C. L., & Remington, R. W. (2013). Attentional capture does not depend on feature similarity, but on target-nontarget relations. Psychological Science, 24(5), 634–647. doi:https://doi.org/10.1177/0956797612458528

Becker, S. I., Harris, A. M., Venini, D., & Retell, J. D. (2014). Visual search for color and shape: When is the gaze guided by feature relationships, when by feature values? Journal of Experimental Psychology: Human Perception and Performance, 40(1), 264–291. doi:https://doi.org/10.1037/a0033489

Becker, S. I., Lewis, A. J., & Axtens, J. E. (2017). Top-down knowledge modulates onset capture in a feedforward manner. Psychonomic Bulletin & Review, 24(2), 436–446. doi:https://doi.org/10.3758/s13423-016-1134-2

Becker, S. I., Martin, A., & Hamblin-Frohman, Z. (2019). Target templates in singleton search vs. feature-based search modes. Visual Cognition, 27, 502–517.

Bowman, H., & Wyble, B. P. (2007). The simultaneous type, serial token model of temporal attention and working memory. Psychological Review, 114, 38–70.

Chartier, S., Cousineau, D., & Charbonneau, D. (2004). A connexionist model of the attentional blink effect during a rapid serial visual presentation task. Proceedings of the International Conference on Cognitive Modelling, ICCM 2004, 64–69.

Chun, M. (1997). Temporal binding errors are redistributed by the attentional blink. Perception & Psychophysics, 59, 1191–1199.

Chun, M. M., & Potter, M. C. (1995). A two-stage model for multiple target detection in rapid serial visual presentation. Journal of Experimental Psychology: Human Perception and Performance, 21, 109–127.

Dalton, P., & Lavie, N. (2006). Temporal attentional capture: Effects of irrelevant singletons on rapid serial visual search. Psychonomic Bulletin & Review, 13, 881–885.

Downing, C. J. (1988). Expectancy and visual-spatial attention: Effects on perceptual quality. Journal of Experimental Psychology: Human Perception and Performance, 14, 188–202.

Duncan, J., & Humphreys, G. W. (1989). Visual search and stimulus similarity. Psychological Review, 96(3), 433–458. doi:https://doi.org/10.1037/0033-295X.96.3.433

Duncan, J., Ward, R., & Shapiro, K. L. (1994). Direct measurement of attentional dwell time in human vision. Nature, 369, 313–315.

Dux, P. E., Asplund, C. L., & Marois, R. (2009). Both exogenous and endogenous target salience manipulations support resource depletion accounts of the attentional blink: A reply to Olivers, Spalek, Kawahara, and Di Lollo (2009). Psychonomic Bulletin & Review, 16, 219–224.

Dux, P. E., & Marois, R. (2009). The attentional blink: A review of data and theory. Attention, Perception, & Psychophysics, 71, 1683–1700.

Findlay, J. M., Brown, V., & Gilchrist, I. G. (2001). Saccade target selection in visual search: The effect of information from the previous fixation. Vision Research, 41, 87–95.

Folk, C. L., Ester, E. F., & Troemel, K. (2009). How to keep attention from straying: Get engaged! Psychonomic Bulletin & Review, 16, 127–132.

Folk, C. L., Leber, A. B., & Egeth, H. E. (2008). Top-down control settings and the attentional blink: Evidence for non-spatial contingent capture. Visual Cognition, 16, 616–642.

Folk, C. L., & Remington, R. (1998). Selectivity in distraction by irrelevant featural singletons: Evidence for two forms of attentional capture. Journal of Experimental Psychology: Human Perception and Performance, 24(3), 847–858. doi:https://doi.org/10.1037/0096-1523.24.3.847

Folk, C. L., Remington, R. W., & Johnston, J. C. (1992). Involuntary covert orienting is contingent on attentional control settings. Journal of Experimental Psychology: Human Perception and Performance, 18(4), 1030–1044. doi:https://doi.org/10.1037/0096-1523.18.4.1030

Folk, C. L., Leber, A. B., & Egeth, H. E. (2002). Made you blink! Contingent attentional capture produces a spatial blink. Perception & Psychophysics, 64, 741–753.

Godijn, R., & Theeuwes, J. (2002). Parallel programming of saccades: Evidence for a competitive inhibition model. Journal of Experimental Psychology: Human Perception and Performance, 28, 1039–1054.

Harris, A., Remington, R., & Becker, S. I. (2013). Feature specificity in attentional capture by size and color. Journal of Vision, 13(3), 1–15. doi:https://doi.org/10.1167/13.3.12

Harris, A. M., Becker, S. I., & Remington, R. W. (2015). Capture by colour: Evidence for dimension-specific singleton capture. Attention, Perception, & Psychophysics, 2305–2321. doi:https://doi.org/10.3758/s13414-015-0927-0

Itti, L., & Koch, C. (2000). A saliency-based search mechanism for overt and covert shifts of visual attention. Vision Research, 40(10-12), 1489–1506.

Itti, L., & Koch, C. (2001). Computational modelling of visual attention. Nature Reviews Neuroscience, 2, 194–203.

Kiss, M., Grubert, A., Petersen, A., & Eimer, M. (2012). Attentional capture by salient distractors during visual search is determined by temporal task demands. Journal of Cognitive Neuroscience, 24, 749–759.

Leber, A. (2010). Neural predictors of within-subject fluctuations in attentional control. The Journal of Neuroscience, 30(34), 11458–11465.

Ludwig, C. J. H., & Gilchrist, I. D. (2002). Stimulus-driven and goal-driven control over visual selection. Journal of Experimental Psychology: Human Perception and Performance, 28(4), 902–912. doi:https://doi.org/10.1037/0096-1523.28.4.902

Maki, W. S., & Mebane, M. W. (2006). Attentional capture triggers and attentional blink. Psychonomic Bulletin & Review, 13, 125–131.

Martin, A., & Becker, S. I. (2018). How feature relationships influence attention and awareness: Evidence from eye movements and EEG. Journal of Experimental Psychology: Human Perception and Performance, 44, 1865–1883.

Martinez-Trujillo, J. C., & Treue, S. (2004). Feature-based attention increases the selectivity of population responses in primate visual cortex. Current Biology, 14(1), 744–751. doi:https://doi.org/10.1016/j.cub.2004.04.028

Most, S. B., & Jungé, J. A. (2008). Don't look back: Retroactive, dynamic costs and benefits of emotional capture. Visual Cognition, 16(2–3), 262–278.

Navalpakkam, V., & Itti, L. (2007). Search goal tunes visual features optimally. Neuron, 53(4), 605–617. doi:https://doi.org/10.1016/j.neuron.2007.01.018

Olivers, C. N. L., & Meeter, M. (2008). A boost and bounce theory of temporal attention. Psychological Review, 115, 836–863.

Posner, M. I. (1980). Orienting of attention. Quarterly Journal of Experimental Psychology, 32, 3–25.

Posner, M. I., Cohen, Y., & Rafal, R. D. (1982). Neural systems control of spatial orienting. Philosophical Transactions of the Royal Society B, 298, 187–198.

Raymond, J. E., Shapiro, K. L., & Arnell, K. M. (1992). Temporary suppression of visual processing in an RSVP task: An attentional blink? Journal of Experimental Psychology: Human Perception and Performance, 18, 849–860.

Remington, R. W., & Folk, C. L. (2001). A dissociation between attention and selection. Psychological Science, 12, 511–515.

Schönhammer, J. G., Grubert, A., Kerzel, D., & Becker, S. I. (2016). Attentional guidance by relative features: Behavioral and electrophysiological evidence. Psychophysiology, 53, 1074–1083.

Seiffert, A. E., & Di Lollo, V. (1997). Low-level masking in the attentional blink. Journal of Experimental Psychology: Human Perception and Performance, 23(4), 1061–1073.

Shapiro, K. L., Raymond, J. E., & Arnell, K. M. (1994). Attention to visual pattern information produces the attentional blink in rapid serial visual presentation. Journal of Experimental Psychology: Human Perception and Performance, 20(2), 357–371.

Spalek, T. M., Falcon, L. J., & Di Lollo, V. (2006). Attentional blink and attentional capture: Endogenous versus exogenous control over paying attention to two important events in succession. Perception & Psychophysics, 68, 674–684.

Theeuwes, J. (1992). Stimulus-driven capture and attentional set: Selective search for colour and visual abrupt onsets. Journal of Experimental Psychology: Human Perception and Performance, 20(4), 799–806.

Treisman, A., & Gelade, G. (1980). A feature integration theory of attention. Cognitive Psychology, 12, 97–136.

Visser, T. A. W., Zuvic, S., Bischof, W. F., & Di Lollo, V. (1999). The attentional blink with targets in different spatial locations. Psychonomic Bulletin & Review, 6, 432–436.

Ward, R., Duncan, J., & Shapiro, K. (1997). Effects of similarity, difficulty, and nontarget presentation on the time course of visual attention. Perception & Psychophysics, 59, 593–600.

Wee, S., & Chua, F. K. (2004). Capturing attention when attention "blinks". Journal of Experimental Psychology: Human Perception and Performance, 30(3), 598–612.

Weichselgartner, E., & Sperling, G. (1996). Episodic theory of the dynamics of spatial attention. Psychological Review, 102, 503–532.

Wolfe, J. M. (1994). Guided search 2.0. A revised model of visual search. Psychonomic Bulletin & Review, 1(2), 202–238. doi:https://doi.org/10.3758/BF03200774

Wolfe, J. M. (1998). Visual search. In H. Pashler (Ed.), Attention (pp. 30–73). London, UK: University College London Press.

York, A., & Becker, S. I. (2020). Top-down modulation of gaze capture: Feature similarity, optimal tuning or tuning to relative features? Journal of Vision, 20(4), 1–16.

Zivony, A., & Eimer, M. (2020). Distractor intrusions are the result of delayed attentional engagement: A new temporal variability account of attentional selectivity in dynamic visual tasks. Journal of Experimental Psychology: General. doi:https://doi.org/10.1037/xge0000789

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Cite this article

Becker, S.I., Manoharan, R.T. & Folk, C.L. The attentional blink: A relational account of attentional engagement. Psychon Bull Rev 28, 219–227 (2021). https://doi.org/10.3758/s13423-020-01813-9

DOI: https://doi.org/10.3758/s13423-020-01813-9