Abstract

Purpose

Statistical approaches have been developed to detect bias in individual trials, but guidance on how to detect systematic differences at a meta-analytical level is lacking. In this paper, we examine whether author bias can be detected in a cohort of randomized trials included in a meta-analysis.

Methods

We used mortality data from 35 trials (10,880 patients) included in our previously published meta-analysis. First, we linked each author with their trial (or trials). Then we calculated author-specific odds ratios using univariate cross-tabulation methods. Finally, we tested the effect of authorship using meta-regression, comparing each author’s estimated odds ratio with the pooled odds ratio of all other authors.

Results

The median number of investigators named as authors on the primary trial reports was six (interquartile range: 5-8, range: 2-32). The results showed that the slope of author effect for mortality ranged from −1.35 to 0.71. We identified only one author team showing a marginally significant effect (−0.39; 95% confidence interval, −0.78 to 0.00). This author team has a history of retractions due to data manipulations and ethical violations.

Conclusion

When combining trial-level data to produce a pooled effect estimate, investigators must consider sources of potential bias. Our results suggest that systematic errors can be detected using meta-regression, although further research is needed to examine the sensitivity of this model. Systematic reviewers will benefit from the availability of methods to guard against the dissemination of results with the potential to mislead decision-making.

Systematic reviews “attempt to collate all empirical evidence that fits pre-specified eligibility criteria in order to answer a specific research question”1 to minimize the negative effects of different biases. Even so, the trustworthiness of this scientific evidence can be skewed by evidence arising from biased primary literature.2 Author bias—a systematic difference introduced by an investigator’s prior knowledge, beliefs, opinions, academic pressure to publish, or relationships (e.g., financial)—can potentially distort research findings, including the presentation of results and conclusions.3,4,5

Author bias may result from data manipulation and/or fabrication, academic pressure to publish, effect of study sponsorship, and professional competitiveness.3,6,7 The prevalence and impact of this specific bias are unclear, and methods for its detection have only recently been suggested8,9,10 and are rarely applied. Author bias can be difficult to detect because it often presents alongside other forms of bias. For example, confirmation bias,11,12,13,14,15 whereby authors selectively report only those results that support their previously held beliefs, can present as selective outcome reporting bias or as publication bias. These biases are known to influence medical decision-making16,17 and can be associated with adverse patient outcomes.18,19

One of the most highly publicized cases of author bias involves the work of Joachim Boldt. In 2011, a wide-ranging group of journal editors agreed to retract 88/102 (86%) of Dr. Boldt’s publications since 1999 because of scientific misconduct, evidenced by failure to acquire ethical approval for research20 and by data fabrication.21 At the time of writing, at least 94 of Dr. Boldt’s publications had been retracted.22

In contrast to Dr. Boldt’s findings, large well-designed randomized trials23,24 have demonstrated increased mortality and renal failure associated with hydroxyethyl starch when used for fluid resuscitation. In our meta-analysis,25 a clear signal of harm associated with hydroxyethyl starch was obscured by data from Dr. Boldt’s unretracted studies, which were published before 1999.

The objective of this study was to determine whether meta-regression can be used to identify author bias in a cohort of published trials that includes an author subsequently subject to retraction for misconduct.

Methods

Data source and study design

We included trial-level data from a published systematic review of randomized trials with similar patient populations25 that evaluated the efficacy and safety of hydroxyethyl starch in critically ill patients requiring acute volume resuscitation. We collated, indexed, and cross-referenced the author lists from each trial publication to link investigators with the publications they authored. Some authors were investigators in a single trial; others were co-investigators in multiple trials. If none of the authors on a paper had co-authored any other included paper, they were grouped together as an “author team”. Conversely, we did not consider an author to be part of an author team if he/she was also a co-investigator/author on another paper without the rest of that team. In this case, the author was considered a unique author, and the remaining authors who published together were considered a unique author team. In this way, the original number of unique authors (n = 235) was reduced to 43 unique authors/author teams.
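One way to read the grouping rule above is that authors who appear on exactly the same set of included trials form one unit. The sketch below implements that reading only; the function name `group_author_units`, the trial labels, and the author names are hypothetical and are not taken from the study data.

```python
from collections import defaultdict

def group_author_units(trial_authors):
    """Group authors who appear on exactly the same set of trials.

    trial_authors: dict mapping a trial label to its list of author names.
    Returns a dict mapping a frozenset of trial labels to the sorted list of
    authors who share that exact set (a unique author or an author team).
    """
    trials_by_author = defaultdict(set)
    for trial, authors in trial_authors.items():
        for author in authors:
            trials_by_author[author].add(trial)

    units = defaultdict(list)
    for author, trials in trials_by_author.items():
        units[frozenset(trials)].append(author)
    return {trials: sorted(members) for trials, members in units.items()}

# Hypothetical example: Smith publishes on two trials, once without Jones and Lee,
# so Smith becomes a unique author and Jones + Lee remain a team.
example = {
    "trial_A": ["Smith", "Jones", "Lee"],
    "trial_B": ["Smith", "Patel"],
    "trial_C": ["Garcia", "Chen"],
}
for trials, members in group_author_units(example).items():
    print(sorted(trials), members)
```

Applied to the full author lists, a rule of this kind would collapse individual authors into a much smaller number of analysis units, as described above.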

Statistical analysis

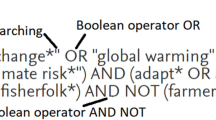

Regression analyses test the association between an outcome measure and one or more covariates in individual clinical studies. Meta-regression, an extension of regression analysis to pooled data, tests the association of one or more covariates with outcome measures in a meta-analysis. We used the objective outcome “mortality” because it contained evaluable data from more than ten trials; a general rule of thumb for meta-regression is that there should be at least ten observations per covariate to ensure that the model does not overfit the data.1
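For reference, a mixed-effects meta-regression with a single covariate is commonly written in the following textbook form. The notation is an assumption introduced here for illustration and is not taken from the original analysis.

```latex
y_i = \beta_0 + \beta_1 x_i + u_i + \varepsilon_i,
\qquad u_i \sim N(0, \tau^2),
\quad \varepsilon_i \sim N(0, v_i)
```

Here $y_i$ is the effect estimate of trial $i$, $x_i$ is the covariate, $v_i$ is the within-trial variance, $\tau^2$ is the between-trial variance, and $\beta_1$ is the slope that quantifies the association between the covariate and the outcome.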

In our study, we used a stepwise mixed-effects linear meta-regression model (method of moments),26,27 with one binary covariate (“author/author team” being either present or absent). The unit of analysis was the individual trial. We used a two-step approach for analysis. In the first step, we linked each author with their trial (or trials). In the second step (meta-regression), we tested the effect of authorship by comparing each author’s estimated odds ratio with the pooled odds ratio of all other authors. The predictor X is the “author effect” (covariate) and the outcome measure is the overall log odds. The covariate was redefined for each model to reflect the presence/absence of the author/author team under test. No analysis included multiple covariates. We evaluated the direction and magnitude of the effect and assessed its significance using 95% confidence intervals (CI). All analyses were conducted using Excel (version 15, Microsoft Corporation), Review Manager (RevMan 5.3.5, Cochrane), and Comprehensive Meta-Analysis (Version 2.0, Biostat Inc.).
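The second step can be sketched as follows. With one binary covariate, a method-of-moments mixed-effects meta-regression reduces to comparing the inverse-variance weighted mean of the trials with the author against that of the trials without, using a common between-trial variance. This is a minimal sketch under those assumptions, not the Comprehensive Meta-Analysis implementation; the function name `author_meta_regression`, the 1.96 multiplier, and the input numbers are illustrative.

```python
import math

def author_meta_regression(log_or, var, present):
    """Mixed-effects meta-regression of trial log odds ratios on one 0/1 covariate.

    log_or  : per-trial log odds ratios
    var     : per-trial within-trial variances
    present : 1 if the author/author team is on the trial, else 0
    """
    groups = {g: [i for i, x in enumerate(present) if x == g] for g in (0, 1)}
    if not groups[0] or not groups[1]:
        raise ValueError("covariate must be present on some trials and absent on others")

    def weighted_means(weights):
        sums = {g: sum(weights[i] for i in idx) for g, idx in groups.items()}
        means = {g: sum(weights[i] * log_or[i] for i in idx) / sums[g]
                 for g, idx in groups.items()}
        return means, sums

    # Step 1: fixed-effect fit, used only to estimate the between-trial variance.
    w = [1.0 / v for v in var]
    means, sums = weighted_means(w)
    q_resid = sum(w[i] * (log_or[i] - means[g]) ** 2
                  for g, idx in groups.items() for i in idx)
    c = sum(sums[g] - sum(w[i] ** 2 for i in idx) / sums[g]
            for g, idx in groups.items())
    df = len(log_or) - 2  # trials minus (intercept + slope)
    tau2 = max(0.0, (q_resid - df) / c) if c > 0 else 0.0

    # Step 2: refit with weights that include the between-trial variance.
    w_star = [1.0 / (v + tau2) for v in var]
    means, sums = weighted_means(w_star)
    slope = means[1] - means[0]
    se = math.sqrt(1.0 / sums[0] + 1.0 / sums[1])
    return {"slope": slope, "se": se, "tau2": tau2,
            "ci_low": slope - 1.96 * se, "ci_high": slope + 1.96 * se}

# Illustrative (not study) data: the last two trials carry the author under test.
result = author_meta_regression(
    log_or=[0.1, 0.1, 0.1, -0.3, -0.3],
    var=[0.04] * 5,
    present=[0, 0, 0, 1, 1],
)
print(result)
```

A negative slope whose 95% CI excludes zero would indicate that the trials of this author report systematically lower odds ratios than the remaining trials.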

Results

We extracted data from 35 trials (10,880 patients) that reported on mortality. The median number of investigators named as authors on the primary trial reports was six (interquartile range: 5-8, range: 2-32). We identified 235 unique authors. While most investigators were named as authors on only one included trial, nine investigators authored more than one primary trial report (median: 2 trials, range: 2-7). Because most investigators authored only one trial report, we treated the investigators who together authored a single trial as one author/author team. This reduced the number of units for analysis to 43 unique authors/author teams, and therefore to 43 separate meta-regression models. For five authors/author teams, meta-regression was not possible because of low event rates.
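Fitting one model per author/author team amounts to building a separate 0/1 covariate for each unit across the same list of trials. A minimal sketch, with hypothetical trial labels and unit names and a helper `presence_covariates` that is not part of any package:

```python
def presence_covariates(trials, unit_trials):
    """Return one 0/1 vector per author unit, aligned with the order of `trials`."""
    covariates = {}
    for unit, authored in unit_trials.items():
        vector = [1 if trial in authored else 0 for trial in trials]
        # A unit present on every trial, or on none, cannot be compared with "all others".
        if 0 < sum(vector) < len(trials):
            covariates[unit] = vector
    return covariates

trials = ["trial_A", "trial_B", "trial_C"]
unit_trials = {
    "Smith": {"trial_A", "trial_B"},
    "Jones+Lee": {"trial_A"},
    "Garcia+Chen": {"trial_C"},
}
covariates = presence_covariates(trials, unit_trials)
print(covariates)
```

Each vector would then be passed, one at a time, to a meta-regression routine, giving as many models as there are units.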

The slope of the regression on the author covariate for mortality ranged from −1.35 to 0.71. The Figure shows the corresponding slopes for each author/author team. We identified only one author team showing a marginally significant effect (−0.39; 95% CI, −0.78 to 0.00). This author team has a history of retractions due to data manipulations and ethical violations.
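The reported slope and interval can be unpacked with a short calculation. The sketch assumes that the slope is on the log odds ratio scale and that the interval is a symmetric Wald interval using 1.96; neither assumption is stated explicitly in the text.

```python
import math
from statistics import NormalDist

slope, ci_low, ci_high = -0.39, -0.78, 0.00  # values reported above

se = (ci_high - ci_low) / (2 * 1.96)          # standard error implied by the interval
z = slope / se                                # Wald z statistic
p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided p-value

ratio_of_odds_ratios = math.exp(slope)        # author's trials vs. all other trials
print(round(se, 3), round(z, 2), round(p_value, 3), round(ratio_of_odds_ratios, 2))
```

Under these assumptions the implied z statistic is about −1.96, which places the effect right at the conventional 5% threshold and is consistent with the description “marginally significant”. The exponentiated slope of about 0.68 would mean that the odds ratio for mortality in this team’s trials is roughly two thirds of that in the remaining trials.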

Discussion

In a post-hoc analysis of a single systematic review, this study demonstrates that meta-regression can identify author bias in a cohort of trials that includes an author subsequently subject to retraction for misconduct.

In the original study publication,25 the magnitude of bias was sufficient to affect the summary effect measure for mortality—a highly important, patient-centered objective outcome. The odds ratio (OR) for death among patients randomized to receive hydroxyethyl starch was 1.09 (95% CI, 1.00 to 1.20), but when we excluded data from an investigator whose subsequent research had been retracted because of scientific misconduct, the risk of mortality increased significantly (OR, 1.12; 95% CI, 1.02 to 1.24). Our study calls attention to the potential for author bias to influence the interpretation of outcomes, and highlights a potential method for its detection.
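A sensitivity analysis of this kind can be sketched as pooling the trial-level log odds ratios twice, with and without the flagged trials. The example uses a DerSimonian-Laird random-effects estimator as an assumption (the pooling model of the original review is not restated here), and the numbers and the name `pooled_odds_ratio` are purely illustrative.

```python
import math

def pooled_odds_ratio(log_or, var):
    """DerSimonian-Laird random-effects pooled odds ratio with a 95% CI."""
    w = [1.0 / v for v in var]
    fixed = sum(wi * yi for wi, yi in zip(w, log_or)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, log_or))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (len(log_or) - 1)) / c) if c > 0 else 0.0
    w_star = [1.0 / (v + tau2) for v in var]
    pooled = sum(wi * yi for wi, yi in zip(w_star, log_or)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return tuple(math.exp(x) for x in (pooled, pooled - 1.96 * se, pooled + 1.96 * se))

# Illustrative data: the third trial is the one flagged for exclusion.
log_or = [0.2, 0.2, -0.4]
var = [0.01, 0.01, 0.01]
flagged = [False, False, True]

all_trials = pooled_odds_ratio(log_or, var)
kept = [(y, v) for y, v, f in zip(log_or, var, flagged) if not f]
without_flagged = pooled_odds_ratio([y for y, _ in kept], [v for _, v in kept])
print(all_trials, without_flagged)
```

In this toy example the flagged trial pulls the pooled odds ratio toward 1, and removing it both raises the estimate and moves the lower confidence limit above 1, which mirrors the direction of the change reported above.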

Bias is a necessary consideration when interpreting the results of research findings, including clinical trials of therapeutic interventions.28 As our understanding of meta-epidemiology advances, so does our desire to ensure that research is bias-free. The possibility of biased (or even fraudulent) data contaminating a meta-analysis complicates assessment.

At an organizational level, researchers are often unsure how to manage data that are potentially biased or even fraudulent.29 Even when publications are retracted, the ultimate decision of whether to include their data in a meta-analysis is unclear.29 Research institutions and editorial boards remain divided on how to guide researchers in dealing with authors who are known to be involved in academic misconduct. For example, two Cochrane review groups (Injuries Group and Pain, Palliative and Supportive Care Group) have indicated that sensitivity analyses were routinely conducted with and without data from Boldt trials to allow readers to assess the potential impact on conclusions. One additional review30 excluded all Boldt trials, although it was not clear whether this was a general review group policy or at the discretion of the individual reviewers. The authors further suggested that all publications from authors guilty of research misconduct should be censored.

The data used for this study came from trials that were reported to be randomized-controlled trials with similar populations, interventions, comparators, and settings, and all measured an objective outcome (mortality). They were identified through a systematic process that is described in detail in our previous publication.25 We have previously provided subgroup and meta-regression analyses showing that the source of heterogeneity could not be isolated to one element of the standard PICOS (population, intervention, comparator, outcomes, study), industry sponsorship, or other expected sources of potential bias.25

The main strength of our approach is that it estimates the risk of author bias using meta-regression, a commonly understood, widely used, and accepted method for assessing whether study-level factors influence the pooled estimate of effect. More complex methods based on bootstrap models9 and Bayesian probabilistic approaches8,29 have also been proposed and may reach similar conclusions. The results of meta-regression models have not been compared with those of these more complex methods on the same data set for detecting author bias.

As with any statistical method, limitations exist. The biggest limitation of this pilot study is that it confirms author bias using data from only a single cohort of trials from a single meta-analysis. This limitation will need to be addressed using data from other published meta-analyses and simulation studies. Another important limitation is the variable, and often low, power to detect a difference, because the effective sample size depends on the number of trials rather than the number of participants within each trial. In other words, it is much more difficult to detect potential author bias for an author with few publications than for an author who has published extensively on a topic. For example, other authors/author teams had negative or positive slopes that did not reach statistical significance. Without additional included publications by these authors/teams, we cannot determine accurately whether their results differ significantly from others in the same analysis. Furthermore, these analyses require an adequate total number of trials regardless of the number per author, and the minimum number of trials needed to detect bias is itself unclear. As with any test with low power, the results of this study should be viewed with caution.
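The dependence of power on the number of trials per author can be illustrated with the standard error of the slope for a binary covariate. The sketch assumes, for simplicity, that every trial has the same total variance (within-trial plus between-trial); the value 0.1 is arbitrary, while the 35 trials and the range of one to seven trials per investigator come from the Results.

```python
import math

def slope_se(n_author_trials, n_total_trials, trial_variance):
    """Standard error of the author slope when all trials share one total variance."""
    n_other = n_total_trials - n_author_trials
    return math.sqrt(trial_variance / n_author_trials + trial_variance / n_other)

for m in (1, 2, 7):
    se = slope_se(m, 35, 0.1)
    # 1.96 * se is the smallest absolute slope whose 95% CI would exclude zero.
    print(m, round(se, 3), round(1.96 * se, 3))
```

Under this simplification, an author with a single trial needs a slope more than twice as large as an author with seven trials before the confidence interval excludes zero, regardless of how many patients each trial enrolled.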

This methodology was specifically chosen 1) for its simplicity and availability in most meta-analytic software packages; and 2) because additional covariates would differ from meta-analysis to meta-analysis and could not be standardized across studies.

Finally, it is likely not possible to truly confirm data manipulation, fraud, or author bias using published summary data without verifying the individual patient data. We believe the potential role of systematic reviews (using all available techniques, including meta-regression) is to flag research that is systematically different from evidence produced by other researchers/research teams. These differences can then be explored in more detail to understand their root causes.

Conclusion

Our results suggest that potential author bias may be detected using readily available statistical methods, including meta-regression. Further evidence is needed to characterize the sensitivity and optimal use of this technique to detect risk of author bias in published reports. To guard against the dissemination of misleading results that could distort healthcare decision-making, systematic reviewers should routinely investigate the potential for author bias before conducting other analyses of their data.

References

1. Higgins JP, Green S. Cochrane handbook for systematic reviews of interventions. Version 5.1.0. The Cochrane Collaboration 2011. Available from URL: https://handbook-5-1.cochrane.org/ (accessed October 2018).

2. Lundh A, Sismondo S, Lexchin J, Busuioc OA, Bero L. Industry sponsorship and research outcome. Cochrane Database Syst Rev 2012; 12: MR000033.

3. Bastian H. “They would say that, wouldn’t they?” A reader’s guide to author and sponsor biases in clinical research. J R Soc Med 2006; 99: 611-4.

4. Buchter RB, Pieper D. Most overviews of Cochrane reviews neglected potential biases from dual authorship. J Clin Epidemiol 2016; 77: 91-4.

5. Singh JP, Grann M, Fazel S. Authorship bias in violence risk assessment? A systematic review and meta-analysis. PLoS One 2013; 8: e72484.

6. Fanelli D. Do pressures to publish increase scientists’ bias? An empirical support from US States Data. PLoS One 2010; 5: e10271.

7. Baerlocher MO, O’Brien J, Newton M, Gautam T, Noble J. Data integrity, reliability and fraud in medical research. Eur J Intern Med 2010; 21: 40-5.

8. Carlisle JB. The analysis of 168 randomised controlled trials to test data integrity. Anaesthesia 2012; 67: 521-37.

9. Pirracchio R, Resche-Rigon M, Chevret S, Journois D. Do simple screening statistical tools help to detect reporting bias? Ann Intensive Care 2013; 3: 29.

10. Hein J, Zobrist R, Konrad C, Schuepfer G. Scientific fraud in 20 falsified anesthesia papers: detection using financial auditing methods. Anaesthesist 2012; 61: 543-9.

11. Mendel R, Traut-Mattausch E, Jonas E, et al. Confirmation bias: why psychiatrists stick to wrong preliminary diagnoses. Psychol Med 2011; 41: 2651-9.

12. Munro GD, Stansbury JA. The dark side of self-affirmation: confirmation bias and illusory correlation in response to threatening information. Pers Soc Psychol Bull 2009; 35: 1143-53.

13. Jonas E, Schulz-Hardt S, Frey D, Thelen N. Confirmation bias in sequential information search after preliminary decisions: an expansion of dissonance theoretical research on selective exposure to information. J Pers Soc Psychol 2001; 80: 557-71.

14. Goodyear-Smith FA, van Driel ML, Arroll B, Del Mar C. Analysis of decisions made in meta-analyses of depression screening and the risk of confirmation bias: a case study. BMC Med Res Methodol 2012; 12: 76.

15. Pines JM. Profiles in patient safety: confirmation bias in emergency medicine. Acad Emerg Med 2006; 13: 90-4.

16. Bekelman JE, Li Y, Gross CP. Scope and impact of financial conflicts of interest in biomedical research: a systematic review. JAMA 2003; 289: 454-65.

17. Djulbegovic B, Lacevic M, Cantor A, et al. The uncertainty principle and industry-sponsored research. Lancet 2000; 356: 635-8.

18. Trelle S, Reichenbach S, Wandel S, et al. Cardiovascular safety of non-steroidal anti-inflammatory drugs: network meta-analysis. BMJ 2011; 342: c7086.

19. Ross JS, Hill KP, Egilman DS, Krumholz HM. Guest authorship and ghostwriting in publications related to rofecoxib: a case study of industry documents from rofecoxib litigation. JAMA 2008; 299: 1800-12.

20. EIC Joint Statement. Editors-in-Chief Statement Regarding Published Clinical Trials Conducted without IRB Approval by Joachim Boldt - 2011. Available from URL: https://www.springer.com/cda/content/document/cda_downloaddocument/EIC+Joint+Statement+_20110210.pdf?SGWID=0-0-45-1077337-p173850204 (accessed October 2018).

21. Shafer SL. Shadow of doubt. Anesth Analg 2011; 112: 498-500.

22. Abritis A. Retraction Watch - 2015. Available from URL: http://retractionwatch.com/2015/10/12/boldts-retraction-count-upped-to-94-co-author-takes-legal-action-to-prevent-95th/ (accessed October 2018).

23. Myburgh JA, Finfer S, Bellomo R, et al. Hydroxyethyl starch or saline for fluid resuscitation in intensive care. N Engl J Med 2012; 367: 1901-11.

24. Perner A, Haase N, Guttormsen AB, et al. Hydroxyethyl starch 130/0.42 versus Ringer’s acetate in severe sepsis. N Engl J Med 2012; 367: 124-34.

25. Zarychanski R, Abou-Setta AM, Turgeon AF, et al. Association of hydroxyethyl starch administration with mortality and acute kidney injury in critically ill patients requiring volume resuscitation: a systematic review and meta-analysis. JAMA 2013; 309: 678-88.

26. Sterne JA, Juni P, Schulz KF, Altman DG, Bartlett C, Egger M. Statistical methods for assessing the influence of study characteristics on treatment effects in ‘meta-epidemiological’ research. Stat Med 2002; 21: 1513-24.

27. Borenstein M, Hedges LV, Higgins JP, Rothstein HR. Meta-regression. In: Borenstein M, Hedges LV, Higgins JP, Rothstein HR (eds). Introduction to Meta-Analysis. John Wiley & Sons, Ltd; 2009: 187-203.

28. Guyatt G, Rennie D. Users’ Guides to the Medical Literature: a Manual for Evidence-Based Clinical Practice. AMA Press, Chicago; 2002.

29. Carlisle J, Pace N, Cracknell J, Moller A, Pedersen T, Zacharias M. What should the Cochrane Collaboration do about research that is, or might be, fraudulent? Cochrane Database Syst Rev 2013; 5: ED000060.

30. Mutter TC, Ruth CA, Dart AB. Hydroxyethyl starch (HES) versus other fluid therapies: effects on kidney function. Cochrane Database Syst Rev 2013; 7: CD007594.

Acknowledgements

Ryan Zarychanski and Alexis F. Turgeon receive salary support and operating funds from the Canadian Institutes of Health Research (CIHR). Lisa Lix is supported by a Manitoba Research Chair from Research Manitoba and a Foundation Scheme grant from CIHR. These funding agencies had no role in the design or conduct of the study, including but not limited to, study identification, collection, management, analysis, and interpretation of the data, or preparation, review, or approval of the final report.

Conflicts of interest

None declared.

Editorial responsibility

This submission was handled by Dr. Gregory L. Bryson, Deputy Editor-in-Chief, Canadian Journal of Anesthesia.

Author contributions

Ahmed Abou-Setta, Rasheda Rabbani, Lisa Lix, and Ryan Zarychanski contributed substantially to all aspects of this manuscript, including study conception and design; acquisition, analysis, and interpretation of data; and drafting the article. Alexis Turgeon, Brett Houston, and Dean Fergusson contributed substantially to the interpretation of data.

Cite this article

Abou-Setta, A.M., Rabbani, R., Lix, L.M. et al. Can authorship bias be detected in meta-analysis?. Can J Anesth/J Can Anesth 66, 287–292 (2019). https://doi.org/10.1007/s12630-018-01268-6

DOI: https://doi.org/10.1007/s12630-018-01268-6